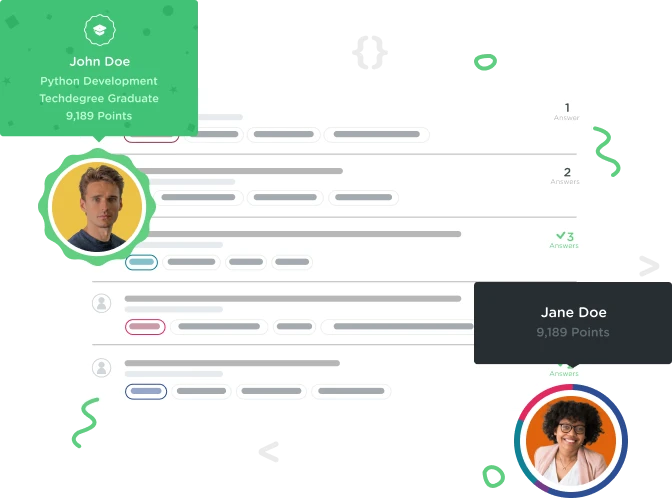

Welcome to the Treehouse Community

Want to collaborate on code errors? Have bugs you need feedback on? Looking for an extra set of eyes on your latest project? Get support with fellow developers, designers, and programmers of all backgrounds and skill levels here with the Treehouse Community! While you're at it, check out some resources Treehouse students have shared here.

Looking to learn something new?

Treehouse offers a seven day free trial for new students. Get access to thousands of hours of content and join thousands of Treehouse students and alumni in the community today.

Start your free trial

Andreas cormack

Python Web Development Techdegree Graduate 33,011 Points

Load large CSV files into a MySQL database

I have written code that loops through a CSV file and inserts each row into a table, but this is not the best approach when the file is large, as the system has to run thousands of INSERT statements. Does anyone have any ideas as to how this can be done? I have tried the LOAD DATA INFILE statement, but that keeps throwing errors.

2 Answers

miguelcastro2

Courses Plus Student 6,573 Points

Use the "mysqlimport" command. It works fast and is suited for large CSV files.

    mysqlimport --ignore-lines=1 \
        --fields-terminated-by=, \
        --local -u root \
        -p Database \
        TableName.csv

Andreas cormack

Python Web Development Techdegree Graduate 33,011 Points

Thanks Miguel, I got everything working fine but hit another problem. Plain SELECT statements are OK, but when I run any statement with a WHERE clause, MySQL returns the same record twice. My project has also reached a dead end, as the hosting provider doesn't allow the mysqlimport command for security reasons: the Apache settings would need to be changed for it to work. Thanks anyway.
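
Where mysqlimport is not available, one possible middle ground between it and row-by-row inserts is to send the rows in batches. The following is only a minimal sketch in Python, assuming a DB-API driver such as PyMySQL or mysql-connector-python, whose cursors provide `executemany`. The helper names `read_batches` and `load_csv`, the default batch size, and the column names in the usage note are hypothetical and not from the thread.

```python
import csv
from itertools import islice


def read_batches(csv_file, batch_size=1000, skip_header=True):
    """Yield lists of row tuples, at most batch_size rows per list."""
    reader = csv.reader(csv_file)
    if skip_header:
        # Mirrors --ignore-lines=1 in the mysqlimport command above.
        next(reader, None)
    while True:
        batch = [tuple(row) for row in islice(reader, batch_size)]
        if not batch:
            break
        yield batch


def load_csv(cursor, csv_file, insert_sql, batch_size=1000):
    """Insert every row of csv_file through cursor, one batch at a time.

    cursor is any DB-API cursor; insert_sql is a parameterised INSERT
    whose placeholders match the driver's paramstyle. Returns the
    number of rows sent.
    """
    total = 0
    for batch in read_batches(csv_file, batch_size):
        # One executemany call per batch instead of one execute per row.
        cursor.executemany(insert_sql, batch)
        total += len(batch)
    return total
```

With a real connection `conn`, usage might look like `load_csv(conn.cursor(), open("TableName.csv", newline=""), "INSERT INTO TableName (col1, col2) VALUES (%s, %s)")` followed by `conn.commit()`. Here `col1` and `col2` stand in for the real column names. Every value arrives as a string, so any type conversion is left to the database or to an extra step before the insert.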