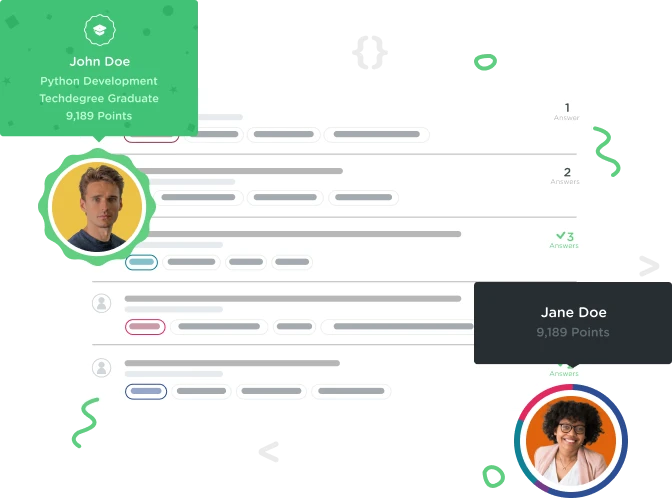

Allan Pedersen

2,421 Points

Maximum times of for looping

Is there a best practice for how deeply for loops should be nested?

I'm building a web scraper and I'm currently on my fourth for loop nested inside the first one. Could someone offer guidance on a better way of doing this?

#!/usr/bin/env python3

from pymongo import MongoClient
import urllib.request
from bs4 import BeautifulSoup
import time


# Connects to the Mongo DB
def open_db():
    client = MongoClient('localhost:27017')
    db = client.homeDepot
    return db


# Url scaffolding variable(s) for finding data
base_url = "http://www.arla.dk"


# Function for capturing the response and parsing of data from each url
def url_souping(url):
    url_response = urllib.request.urlopen(base_url + url)
    url_parsed = BeautifulSoup(url_response, "html.parser")
    return url_parsed


def recipe_soup(url):
    url_response = urllib.request.urlopen(base_url + url)
    url_parsed = BeautifulSoup(url_response, "html.parser")
    return url_parsed


start_time = time.time()

url_parsed = url_souping("/opskrifter/madplaner")

for link in url_parsed.find_all('option'):
    ids = link.get('value')  # Gets the week number ids
    url_parsed = url_souping("/opskrifter/madplaner/?weeklymenuid=" + str(ids))

    for meal_day in url_parsed.select("[class~=recipes-for-the-day] a[href]"):
        urls = meal_day.get('href')
        url_parsed = url_souping(urls)

        for recipes in url_parsed.select('[id~=recipe-media]'):
            recipe_id = recipes.get('data-recipe-id')
            url_parsed = recipe_soup("/WebAppsRecipes/Ingredients/UpdateServings?recipeId=" + recipe_id + "&amount=1")
            print(url_parsed)

            for details_recipes in url_parsed.select('[class~=ingredients-list]'):
                pass  # the body of this loop is not part of the posted code

print("--- %s seconds ---" % (time.time() - start_time))

Allan Pedersen

2,421 Points

Point taken, Steven. :) Updated with the current state of the code. Sorry for the lack of comments; I hope it's readable.

1 Answer

Steven Parker

243,656 Points

At first glance it appears that your loop nesting is in accordance with element nesting in the data you are investigating, which seems appropriate to the task, assuming the structure of the data is reliable. It might be a bit easier to follow if you used a different name for each level instead of re-using url_parsed, though.
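As a rough sketch of that renaming idea, the same loops could look something like this with one name per level. The `fetch` parameter is an assumption standing in for the `url_souping` helper from the question, and `collect_ingredient_pages`, `menu_page`, `week_page`, `day_page` and the other names are made up for illustration only.

```python
def collect_ingredient_pages(fetch):
    """Walk menu -> week -> day -> recipe with a distinct name per level.

    `fetch` is assumed to behave like url_souping from the question:
    it takes a relative URL and returns a parsed BeautifulSoup page.
    """
    ingredient_pages = []
    menu_page = fetch("/opskrifter/madplaner")

    for week_option in menu_page.find_all("option"):
        week_id = week_option.get("value")
        week_page = fetch("/opskrifter/madplaner/?weeklymenuid=" + str(week_id))

        for day_link in week_page.select("[class~=recipes-for-the-day] a[href]"):
            day_page = fetch(day_link.get("href"))

            for recipe_media in day_page.select("[id~=recipe-media]"):
                recipe_id = recipe_media.get("data-recipe-id")
                ingredient_page = fetch(
                    "/WebAppsRecipes/Ingredients/UpdateServings?recipeId="
                    + recipe_id + "&amount=1"
                )
                ingredient_pages.append(ingredient_page)

    return ingredient_pages
```

Calling `collect_ingredient_pages(url_souping)` should visit the same pages as the posted loops. Because no name is overwritten, each loop keeps iterating over the page it started with, which makes the flow easier to trace.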

Other methods I can think of that would reduce the nesting still wouldn't reduce the number of iterations, so I don't know that there would be any benefit.
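One such method, sketched here under assumptions, is to give each level its own generator function so the top-level code becomes a single flat loop. The function names (`iter_week_ids`, `iter_day_urls`, `iter_recipe_ids`, `iter_all_recipe_ids`) are hypothetical, and `fetch` is again assumed to work like `url_souping` from the question. The same pages are fetched the same number of times; only the visible indentation changes.

```python
def iter_week_ids(fetch):
    """Yield every weekly menu id listed on the meal plan overview page."""
    menu_page = fetch("/opskrifter/madplaner")
    for week_option in menu_page.find_all("option"):
        yield week_option.get("value")


def iter_day_urls(fetch, week_ids):
    """Yield the recipe link of every day in every given week."""
    for week_id in week_ids:
        week_page = fetch("/opskrifter/madplaner/?weeklymenuid=" + str(week_id))
        for day_link in week_page.select("[class~=recipes-for-the-day] a[href]"):
            yield day_link.get("href")


def iter_recipe_ids(fetch, day_urls):
    """Yield the recipe id found on each day's recipe page."""
    for day_url in day_urls:
        day_page = fetch(day_url)
        for recipe_media in day_page.select("[id~=recipe-media]"):
            yield recipe_media.get("data-recipe-id")


def iter_all_recipe_ids(fetch):
    """Chain the three levels into one lazy stream of recipe ids."""
    return iter_recipe_ids(fetch, iter_day_urls(fetch, iter_week_ids(fetch)))
```

With this in place, the scraper's main loop could be written as `for recipe_id in iter_all_recipe_ids(url_souping):` followed by the ingredient request. Whether that reads better than the nested version is a matter of taste, since the work done is identical.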

Maybe a more experienced Python'er (especially if they are familiar with those libraries) might have some other comments.

Steven Parker

243,656 Points

It might help if you share your code. Remember to blockquote it.

Even better, make a snapshot of your workspace and post the link to it here.