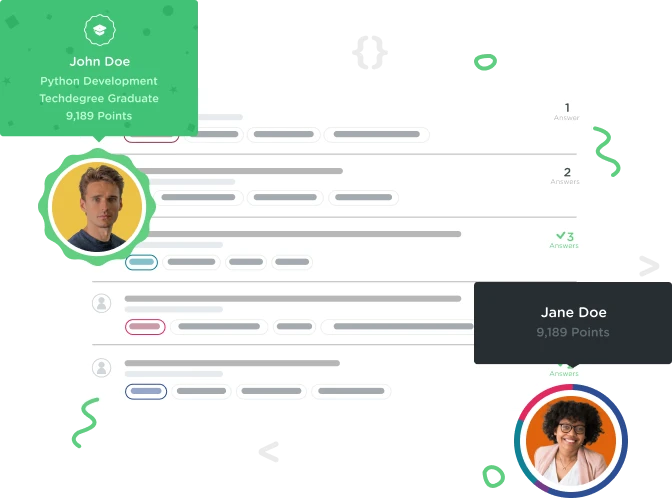

Welcome to the Treehouse Community

Want to collaborate on code errors? Have bugs you need feedback on? Looking for an extra set of eyes on your latest project? Get support with fellow developers, designers, and programmers of all backgrounds and skill levels here with the Treehouse Community! While you're at it, check out some resources Treehouse students have shared here.

Looking to learn something new?

Treehouse offers a seven day free trial for new students. Get access to thousands of hours of content and join thousands of Treehouse students and alumni in the community today.

Start your free trial

Jonathan Kuhl

26,133 Points

Not getting any data at all from spider

I've been following the video and made my spider with almost no changes to the code, but I got no results from it. The report says zero webpages were crawled, even though the URLs were copied and pasted from the webpages Treehouse provided:

```python
import scrapy


class HorseSpider(scrapy.Spider):
    name = 'ike'

    def start_request(self):
        urls = [
            'https://treehouse-projects.github.io/horse-land/index.html',
            'https://treehouse-projects.github.io/horse-land/mustang.html'
        ]
        return [scrapy.Request(url=url, callback=self.parse) for url in urls]

    def parse(self, response):
        url = response.url
        page = url.split('/')[-1]
        filename = 'horses-%s' % page
        print('URL: {}'.format(url))
        with open(filename, 'wb') as file:
            file.write(response.body)
        print('Saved as %s' % filename)
```

What am I missing?

2 Answers

Trevor J

Python Web Development Techdegree Student 2,107 Points

Check `def start_request(self):`. It should be `def start_requests(self):`.

jessechapman

4,088 Points

I made the same error as the initial poster (defining a `start_request` function). Changing it to `start_requests` worked for me.
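For anyone wondering why the misspelled name produces zero crawled pages instead of an error, here is a rough sketch of the mechanism. It is a toy model, not Scrapy's actual code: `ToySpider`, `crawl`, `MisnamedSpider`, and `FixedSpider` are made-up names for illustration. The assumption is that the framework calls the entry hook by its exact name and that the base class supplies a default that works from an empty URL list, so a method called `start_request` is simply never invoked.

```python
# Toy model only: these classes imitate name-based hook lookup and are NOT Scrapy's code.

class ToySpider:
    """Stand-in for a framework base class that provides a default entry hook."""
    start_urls = []  # empty by default, like a spider with no URLs configured

    def start_requests(self):
        # Default hook: build "requests" from start_urls (there are none here).
        return list(self.start_urls)


def crawl(spider):
    """Stand-in for the engine: it only ever calls the hook by its exact name."""
    return list(spider.start_requests())


URLS = [
    'https://treehouse-projects.github.io/horse-land/index.html',
    'https://treehouse-projects.github.io/horse-land/mustang.html',
]


class MisnamedSpider(ToySpider):
    def start_request(self):  # typo: never called, so the default hook runs instead
        return URLS


class FixedSpider(ToySpider):
    def start_requests(self):  # exact name: overrides the default hook
        return URLS


print(len(crawl(MisnamedSpider())))  # 0
print(len(crawl(FixedSpider())))     # 2
```

In this model the misnamed spider is still a perfectly valid class, which is why nothing complains: the extra method just sits there unused.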

Mark Chesney

11,747 Points

That's just a convention. Yes, you can call it `start_request` or `start_requests` but you do have to be consistent. Good pointing out this detail though, Trevor!

Mark Chesney

11,747 Points

Actually, now that I've reached the end of the video, and @kenalger says "we need `start_requests` and `parse`", now I'm not sure...
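On the doubt above: as far as I know, Scrapy looks these methods up by name (`start_requests` as the entry hook in the versions the course uses, and `parse` as the default callback when a request does not specify one), so the spelling does matter. One way to catch a near-miss before running a crawl is a small check like the sketch below. It uses only the standard library; `find_near_miss_hooks` and `EXPECTED_HOOKS` are hypothetical names, the 0.8 similarity cutoff is an arbitrary choice, and the plain `HorseSpider` class here only stands in for the spider from the question.

```python
import difflib

# Hook names taken from this thread; the tuple itself is an illustrative assumption.
EXPECTED_HOOKS = ("start_requests", "parse")


def find_near_miss_hooks(cls, expected=EXPECTED_HOOKS, cutoff=0.8):
    """Return {defined_name: expected_name} for methods that look like typos of a hook."""
    suspects = {}
    for name, value in vars(cls).items():
        # Skip non-methods and names that already match a hook exactly.
        if not callable(value) or name in expected:
            continue
        match = difflib.get_close_matches(name, expected, n=1, cutoff=cutoff)
        if match:
            suspects[name] = match[0]
    return suspects


class HorseSpider:  # plain class standing in for the spider in the question
    name = 'ike'

    def start_request(self):  # the misspelling from the original post
        return []

    def parse(self, response):
        return None


print(find_near_miss_hooks(HorseSpider))  # {'start_request': 'start_requests'}
```

This only inspects names defined directly on the class, so it would not flag anything inherited from a parent class.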