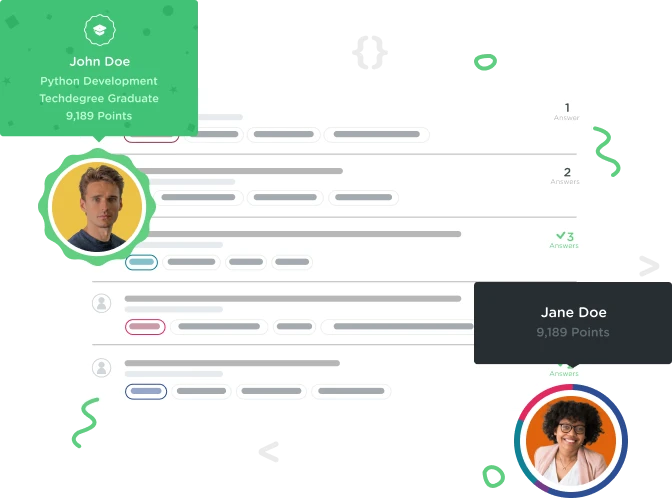

Welcome to the Treehouse Community

Want to collaborate on code errors? Have bugs you need feedback on? Looking for an extra set of eyes on your latest project? Get support with fellow developers, designers, and programmers of all backgrounds and skill levels here with the Treehouse Community! While you're at it, check out some resources Treehouse students have shared here.

Looking to learn something new?

Treehouse offers a seven day free trial for new students. Get access to thousands of hours of content and join thousands of Treehouse students and alumni in the community today.

Start your free trial

Mark Chesney

11,747 Points

Sharing my code: web crawling

Hi, here's the code solution the instructor encouraged us to share with the community. Hope it works for everyone!

```python
from urllib.request import urlopen
import re

from bs4 import BeautifulSoup

html = urlopen('https://treehouse-projects.github.io/horse-land/index.html')
soup = BeautifulSoup(html.read(), 'html.parser')

# Print the href of every anchor tag on the home page.
for link in soup.find_all('a'):
    print(link.get('href'))

site_links = []

# Here's a function that will receive a website's internal link and parse the HTML on that page.
def internal_links(linkURL):
    html = urlopen('https://treehouse-projects.github.io/horse-land/{}'.format(linkURL))
    soup = BeautifulSoup(html, 'html.parser')
    # Return the first anchor tag whose href ends in ".html" (None if there is none).
    # The dot is escaped so it matches a literal "." rather than any character.
    return soup.find('a', href=re.compile(r'\.html$'))

if __name__ == '__main__':
    urls = internal_links('index.html')
    # soup.find() returns a single Tag or None, so test for None instead of using len().
    while urls is not None:
        page = urls.attrs['href']
        if page not in site_links:
            site_links.append(page)
            print(page)
            print('\n=============\n')
            urls = internal_links(page)
        else:
            # Stop once we reach a page that has already been visited.
            break
```
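The loop above follows only the first `.html` link on each page and stops as soon as that link points to a page it has already seen, so pages reachable only through other links are skipped. One way to reach every internal page is a breadth-first crawl with a visited set. This is a minimal sketch, not the course's code: it swaps BeautifulSoup for the standard library's `html.parser.HTMLParser` so it has no third-party dependency, it assumes pages are UTF-8, and `crawl`, `fetch_url` and the `fetch` parameter are hypothetical names (the `fetch` hook lets you pass in pages for testing instead of downloading them).

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.request import urlopen


class LinkCollector(HTMLParser):
    """Collects the href attribute of every <a> tag fed to it."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == 'a':
            for name, value in attrs:
                if name == 'href' and value:
                    self.hrefs.append(value)


def fetch_url(url):
    """Download a page; decoding as UTF-8 is an assumption about the site."""
    with urlopen(url) as response:
        return response.read().decode('utf-8', errors='replace')


def crawl(start_url, fetch=fetch_url):
    """Breadth-first crawl of every .html page on the same host as start_url."""
    host = urlparse(start_url).netloc
    visited = []              # pages in the order they were crawled
    seen = {start_url}        # everything queued so far, to avoid revisits
    queue = deque([start_url])
    while queue:
        url = queue.popleft()
        visited.append(url)
        parser = LinkCollector()
        parser.feed(fetch(url))
        for href in parser.hrefs:
            # Resolve relative links and drop "#fragment" parts.
            absolute, _ = urldefrag(urljoin(url, href))
            parts = urlparse(absolute)
            # Follow only internal pages whose path ends in .html.
            if (parts.netloc == host and parts.path.endswith('.html')
                    and absolute not in seen):
                seen.add(absolute)
                queue.append(absolute)
    return visited
```

To run it against the Horse Land site, call `crawl('https://treehouse-projects.github.io/horse-land/index.html')` and print each returned URL. The `seen` set is what guarantees termination: each page is queued at most once, however many pages link back to it.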

1 Answer

Linda Shum

12,609 Points

Nice work; your logic makes sense. However, I believe your solution for printing the external links will include 'mustang.html', which is an internal link. That internal link must somehow be filtered out if you want to display only the external links.
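One way to filter out links like 'mustang.html' is to resolve each href against the page's own URL and compare hosts: a relative link resolves to the same host, so it is internal. This is a minimal sketch under that assumption; `split_links` is a hypothetical helper, and the Wikipedia URL in the example is illustrative only.

```python
from urllib.parse import urljoin, urlparse


def split_links(page_url, hrefs):
    """Split hrefs found on page_url into (internal, external) absolute URLs.

    A link counts as internal when, after resolving it against page_url,
    it points at the same host (netloc) as the page itself.
    """
    host = urlparse(page_url).netloc
    internal, external = [], []
    for href in hrefs:
        absolute = urljoin(page_url, href)
        if urlparse(absolute).netloc == host:
            internal.append(absolute)
        else:
            external.append(absolute)
    return internal, external


internal, external = split_links(
    'https://treehouse-projects.github.io/horse-land/index.html',
    ['mustang.html', 'https://en.wikipedia.org/wiki/Horse'],
)
print(external)
```

With the first loop's data you could pass `[link.get('href') for link in soup.find_all('a') if link.get('href')]` and print only `external`. Note that comparing hosts alone treats every page on `treehouse-projects.github.io` as internal; checking that the path also starts with `/horse-land/` would be a stricter test.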