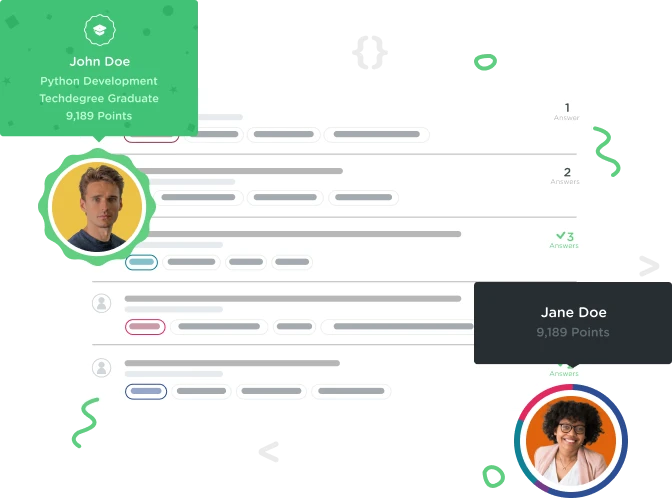

Welcome to the Treehouse Community

Want to collaborate on code errors? Have bugs you need feedback on? Looking for an extra set of eyes on your latest project? Get support with fellow developers, designers, and programmers of all backgrounds and skill levels here with the Treehouse Community! While you're at it, check out some resources Treehouse students have shared here.

Looking to learn something new?

Treehouse offers a seven day free trial for new students. Get access to thousands of hours of content and join thousands of Treehouse students and alumni in the community today.

Start your free trial

brigitte kock

2,054 Points

Why is this not calculating the correct min() and max() value?

Since the program doesn't show the table output when you make a mistake, I have no clue what I'm doing wrong in the code below:

SELECT MIN(rating) AS "star_min", MAX(rating) AS "star_max" FROM reviews GROUP BY movie_id HAVING movie_id = 6;
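
One way to see what the query actually returns, since the challenge hides the table output on a wrong answer, is to run it against a small in-memory SQLite database. This is only a sketch: the sample rows are made up, the real `reviews` table from the course is not shown in this post, and the `WHERE` variant is included purely for comparison, on the assumption that the checker may expect that form.

```python
import sqlite3

# Hypothetical sample data; the course's real reviews table is not shown in the post.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE reviews (movie_id INTEGER, rating INTEGER)")
conn.executemany(
    "INSERT INTO reviews (movie_id, rating) VALUES (?, ?)",
    [(6, 2), (6, 5), (6, 3), (7, 1), (7, 4)],
)

# Form from the post: group every movie, then keep only the group for movie 6.
having_query = """
    SELECT MIN(rating) AS star_min, MAX(rating) AS star_max
    FROM reviews GROUP BY movie_id HAVING movie_id = 6
"""

# Comparison form: filter the rows first, then aggregate what is left.
where_query = """
    SELECT MIN(rating) AS star_min, MAX(rating) AS star_max
    FROM reviews WHERE movie_id = 6
"""

having_result = conn.execute(having_query).fetchall()
where_result = conn.execute(where_query).fetchall()
print(having_result)
print(where_result)
```

If both queries print the same row on the sample data, the MIN() and MAX() arithmetic is probably not the problem, and the difference is more likely in how the challenge checks the query text.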